Enterprise AI keeps failing. Not because the models are bad. But because the architecture around them is missing something fundamental/

I've spent the last two years talking to CFOs, enterprise architects, and operations leaders about why their AI deployments aren't working.

Not why they failed in the technical sense; the models work, the APIs connect, the dashboards render. They fail in the operational sense. They run for six months, generate interesting outputs, and then quietly get deprioritised when the champion moves to another role or the budget cycle resets. The insights were real. The ROI never materialised. Nobody knows quite why.

After enough of these conversations, I stopped believing it was a capability problem.

The models are good enough. They've been good enough for a while. The problem is something else, and I want to try to articulate it as precisely as I can because I think the industry is systematically misdiagnosing it, and that misdiagnosis is why we keep building more capable systems that produce less reliable outcomes.

The semiconductor parallel

For most of the twentieth century, computing got faster by shrinking transistors. Moore's Law held because the problem was simple: smaller transistors, faster switching, more compute per unit area. The industry optimised relentlessly on one variable and it worked, until it didn't.

The transistor hit physical limits. The response from most of the industry was to keep pushing: smaller nodes, more exotic materials, higher frequencies. The response from the people who understood the system was different: the constraint has shifted. You're no longer optimising the right variable. The bottleneck isn't transistor speed anymore. It's memory bandwidth, power architecture, and the efficiency of the system as a whole. Optimising compute while ignoring the memory and power architecture produces faster processors that deliver diminishing real-world returns because the rest of the system can't keep up.

The AI industry is making the same mistake right now.

The dominant narrative is that enterprise AI fails because the models aren't capable enough yet. The reasoning goes: current LLMs hallucinate, they lose context over long conversations, they can't reason reliably over complex multi-step problems. The solution is more parameters, longer context windows, better reasoning architectures. When the models are good enough, enterprise AI will work.

This is the transistor-shrinking response. Keep optimising the one variable that's easiest to measure while the actual bottleneck sits elsewhere in the system.

The models are already capable enough to deliver significant enterprise value in most of the domains people are trying to deploy them. The bottleneck isn't capability. It's the absence of something that capability alone cannot provide: durable, governed, provenance-grounded memory.

What I mean by memory

Not storage. Not retrieval. Not a vector database that returns semantically similar chunks when you query it.

I mean the thing that makes intelligence continuous rather than episodic. The thing that connects what happened yesterday to what's happening now to what should happen next. The thing that preserves not just the outcome of a decision but the reasoning context behind it: why it was made, what conditions were in force at the time, what was tried before, what was learned.

Biological intelligence doesn't work by storing everything and retrieving it on demand. It works by maintaining a continuously updated model of the world, consolidating experience into stable knowledge over time, and using that consolidated knowledge to predict and evaluate new inputs before expending significant resources on them.

The brain doesn't read every incoming signal carefully. It predicts what it expects to receive and only escalates attention when reality deviates from prediction. The deviation, the surprise, is what triggers deep processing. Everything that matches expectation is handled with minimal compute. Everything that doesn't match expectation gets investigated.

This is not how enterprise AI systems are currently built. They are built as retrieval systems: you ask a question, they find relevant information, they generate an answer. Each interaction is essentially stateless. The system doesn't remember that it answered a similar question yesterday and got it wrong. It doesn't remember that this vendor has a history of anomalous behaviour. It doesn't remember that this payment pattern deviates from what it has seen before. It processes each input as if it arrived in a vacuum.

That's not intelligence. That's expensive retrieval with a natural language interface.

Why this is an architecture problem

The reason enterprise AI deployments fail isn't that the model gave a wrong answer. It's that the wrong answer had no provenance — no traceable reasoning chain, no connection to the specific context that made it wrong, no mechanism to prevent the same wrong answer tomorrow. And the right answers have the same problem in reverse — they worked, but nobody knows exactly why, so they can't be reliably reproduced or systematically improved.

Without memory, every interaction is new. The system can't learn from its own history inside a specific deployment. It can't accumulate the contextual knowledge that makes outputs more reliable over time. It can't distinguish between "this looks anomalous because I haven't seen it before" and "this looks anomalous because it deviates from the established pattern for this specific vendor in this specific context." The difference between those two things is the difference between a false positive and a real finding — and without memory, you can't know which you have.

This creates a specific and consistent failure mode: the pilot works, the demo is impressive, and then in production the false positive rate is too high, or the outputs aren't auditable enough for compliance, or the system keeps surfacing things the finance team already knew about and ignoring things they didn't. The model is performing exactly as designed. The architecture around it is missing the substrate that would make the performance operationally useful.

What the research actually suggests

This isn't an original observation. It's implicit in work that has been sitting in cognitive science and neuroscience for decades and hasn't been adequately translated into AI system design.

James McClelland's Complementary Learning Systems theory describes how biological intelligence uses two distinct memory systems; one fast and episodic, one slow and integrative that operate in complementary ways to balance rapid learning with stable retention. The episodic system captures new experiences quickly and specifically. The semantic system consolidates patterns from those experiences gradually into generalised knowledge. Together they solve a problem that a single system can't solve alone: how to learn something new without overwriting what you already know.

Karl Friston's Free Energy Principle formalises the prediction-and-surprise model I described earlier. Intelligent systems minimise surprise by maintaining generative models of their environment and updating those models when predictions fail. The important implication is that the goal of intelligence isn't to process all inputs carefully: it's to build models good enough that most inputs don't require careful processing at all.

Jeff Hawkins' Thousand Brains theory identifies the neocortex as a prediction machine built from thousands of reference frame models that vote on the most likely interpretation of sensory input. The insight is that intelligence is distributed, parallel, and model-based rather than sequential and reactive.

What strikes me about all three frameworks is the same thing: they all describe intelligence as fundamentally dependent on memory architecture, not processing power. The brain is not fast because neurons are fast. It's fast because it has built such good models of its environment that most of what it encounters requires almost no processing at all. The surprise signal, the prediction error, is what drives attention, learning, and action. Everything else is prediction confirmed.

But here's the thing none of these frameworks fully addresses, and where I think the most important engineering insight lives: the neocortex is not the whole brain. It's approximately 70% of it by volume, and it's extraordinary but it receives pre-filtered, heavily processed input from the thalamus before it ever engages with a signal. The hippocampus handles episodic encoding before consolidation into cortical structures. The basal ganglia manage action selection separately from cortical reasoning. The amygdala flags salience before the neocortex processes content.

The neocortex is powerful precisely because it doesn't have to deal with everything. It sits downstream of a sophisticated preprocessing architecture that decides what it gets to think about, when, and with what priority.

Building enterprise AI around a powerful language model alone is building a neocortex without the rest of the brain. The model is capable. The architecture that would make that capability operationally reliable is missing.

The survivorship bias problem

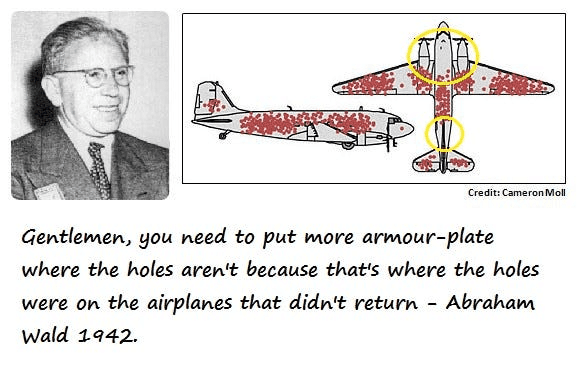

In World War II, the statistician Abraham Wald was asked to help the US military decide where to add armour to their aircraft. Engineers had analysed returning planes and mapped where the bullet holes concentrated: wings, fuselage, tail. The obvious conclusion: reinforce those areas.

Wald pointed out that they were looking at the wrong data. The planes that returned were the ones that had been hit in those areas and survived. The planes that didn't return, the ones that had been shot down were missing from the analysis entirely. The bullet holes on returning planes showed where a plane could be hit and still fly. The places with no bullet holes on returning planes were the places where a hit was fatal.

The recommendation was to reinforce the areas that showed no damage on the planes that came back.

I keep thinking about this when I look at how the enterprise AI industry evaluates its own performance. We measure the deployments that produced outputs. We analyse the pilots that generated findings. We optimise the systems that are running.

The failures are mostly invisible. The pilots that ran for six months and got quietly deprioritised don't generate case studies. The deployments that produced high false positive rates and lost their champions don't get written up. The AI systems that were technically impressive and operationally useless don't appear in the benchmark comparisons.

The model builders see the capability working correctly. The enterprise deployers see the operational result not meeting expectations. Both are looking at returning planes. Neither is looking at what's missing from the analysis: the memory architecture that would have made the capability land reliably in the operational context.

We keep reinforcing the wings because the wings are where we can see the bullet holes. The fatal hits are somewhere we're not looking.

What this creates in practice

Enterprises don't fail because they lack data. They have more data than they can process. They fail because they lack the architecture to preserve the reasoning context behind that data: what it meant, why it was acted on, what was learned, what changed as a result.

This creates a specific class of failures I've started thinking of as institutional amnesia. The enterprise records transactions but not the reasoning behind them. It stores documents but not the relationships between them. It captures outcomes but not the causal chains that produced them. Errors repeat because the context that would identify them as errors isn't preserved. Knowledge walks out the door when people leave. Decisions get made without access to the institutional context that would make them better decisions.

An AI system deployed on top of this environment inherits all of its amnesia. It processes whatever data it can access, generates outputs based on that processing, and has no mechanism to learn from the gap between its outputs and the institutional reality it's operating in. The model doesn't know what it doesn't know. And crucially; neither does the enterprise it's trying to serve.

This is not a model problem. You can't solve it with a better model. You can only solve it by building the memory substrate that gives the model the context it needs to be operationally useful: the equivalent of the thalamic filtering, the hippocampal encoding, the basal ganglia governance, all the architecture that makes the neocortex functional rather than just capable.

What I think needs to change

The question I keep returning to is: what would it look like to build enterprise AI the way the brain actually works, rather than the way we imagine it should work?

Not a single powerful model that handles everything. The brain doesn't work that way. It's a hierarchy of specialised systems with different memory timescales, different processing depths, and different action authorities, coordinated by a governance architecture that decides what gets escalated and what gets handled automatically.

The processing power is not the constraint. The memory substrate is the constraint. Build the memory substrate first: episodic, semantic, provenance-grounded, governed, and the processing capability becomes operationally useful. Skip the memory substrate and keep adding processing capability, and you keep getting the same failure mode with a more expensive system.

The semiconductor industry learned this lesson the hard way. The companies that kept shrinking transistors hit the wall. The companies that redesigned the architecture: memory bandwidth, power efficiency, system-level co-design,... are the ones defining the next decade of computing.

Enterprise AI is at the same inflection point. The transistor-shrinking phase is ending. The architecture phase is beginning.

The question I'm sitting with

If you accept that the memory substrate is the missing layer; that the bottleneck is not capability but durable, governed, provenance-grounded context then the interesting question becomes: what does that substrate actually look like in practice, and what's the right entry point for proving it works?

I have a view on this. I'm working on it. But I'm more interested in the question than the answer right now, because I think the people who have thought most carefully about this problem are not all building companies or writing papers, some of them are enterprise architects who have watched enough deployments fail to have a very precise sense of where the failure actually happens.